Snap Odyssey: Micro Expeditions

Project Overview

Snap Odyssey: Micro Expeditions is an educational virtual reality wildlife photography experience in which you learn about the wonderful world of insects by photographing them from a microscopic safari car. The game was planned for a public exhibition where 4 players join as a crew for a 3–5 minute, semi-procedurally generated insect safari trip. At the end of the experience, players can select their favorite pictures to be sent to their email or social media as souvenirs. I built this project for Designing Games for Museums, an elective I took at New York University in Spring 2020.

The goal of this project is to help the American Museum of Natural History explore, design, and test educational games for museums.

Background

In Spring 2020, I took Designing Games for Museums as an elective at New York University, with the American Museum of Natural History as the client. I chose one of my personal projects, Snap Odyssey, as the base and reworked it into an educational interactive experience.

Inspirations

Narrative: Nature documentaries in National Geographic, Discovery Channel, & Animal Planet

Camera Mechanics Gameplay: Pokemon Snap, Beyond Good and Evil, & Fatal Frame

Game Design

Educational

Most games that are designed to be educational first and fun second tend to be boring and don’t fare well with a general audience. My approach was to make the game mechanics fun first and then add educational content, so the game has a solid foundation that a general audience would want to play. A few months earlier, I tested the initial Snap Odyssey demo with some playtesters & was surprised by how much people enjoyed taking pictures in VR even though there were no clear game objectives at the time. That was when I realized the camera mechanic was fun to play and decided to delve deeper into the idea.

The educational material in the game is based on the insects that can be seen in the upcoming Gilder’s Insect Building. I also confirmed with a representative there that the included facts are scientifically accurate.

The camera mechanic allows players to take pictures in virtual reality and share them on social media. It emphasizes photography as creative expression: players see insects in a different way than before and capture them as moments. The shared pictures work as souvenirs that players can show to their friends (and convince them to come & play Snap Odyssey Micro Expeditions!).

Social

Unlike most VR experiences today, this experience is not isolated to the one person wearing the headset. Four players can see & interact with each other synchronously in a shared real-time environment. Whether you’re playing with your friends or with new people you meet in the exhibition, I like the idea of bringing people together into a virtual experience where they capture moments in photographs together.

In the experience, when a player takes a picture of an insect, a snippet of information about the insect is displayed next to the camera. I designed the experience so that each player gets a different snippet of information about the same insect they photographed. This gives players a reason to share what they’ve just learned with each other. Think of it as an emergent study group where a crew of 4 learns about the world of insects. Research shows that small study groups are an effective way to learn, and I think this would be interesting to implement in interactive museum exhibitions, where it can help curators teach the concepts of an exhibition to visitors.
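The idea of giving each crew member a different snippet about the same insect can be sketched roughly as follows. This is a minimal illustration in Python, not the project's actual code: the function name `assign_snippets`, the player IDs, and the placeholder fact strings are all assumptions made for the example.

```python
import random


def assign_snippets(facts, player_ids, rng=None):
    """Give each player one fact about the same insect.

    Hypothetical helper: facts are shuffled, then dealt out in order.
    If there are fewer facts than players, facts repeat in a cycle.
    """
    if not facts:
        raise ValueError("at least one fact is required")
    if rng is None:
        rng = random.Random()
    shuffled = list(facts)  # copy so the caller's list is untouched
    rng.shuffle(shuffled)
    return {
        player: shuffled[i % len(shuffled)]
        for i, player in enumerate(player_ids)
    }


if __name__ == "__main__":
    # Placeholder strings stand in for real, curator-approved facts.
    facts = ["fact A", "fact B", "fact C", "fact D"]
    crew = ["player1", "player2", "player3", "player4"]
    for player, fact in assign_snippets(facts, crew).items():
        print(player, "sees:", fact)
```

With at least as many facts as players, every crew member sees something different, which is what nudges them to compare notes out loud.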

Replayable

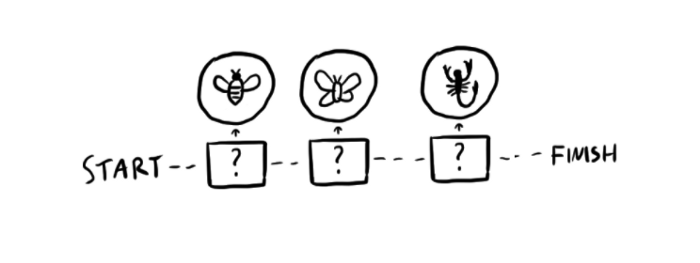

The game uses semi-procedural level generation, so playthroughs differ from one another. The stages, which represent different kinds of insect ecosystems, are shuffled randomly every time the game is run.

Currently, one playthrough takes you through 3 stages randomly selected from 5 possible stages. I believe this gives the experience replay value, since it can be played multiple times with different generated content. It also makes it easy to integrate new stages in the future as part of content updates.
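The stage selection described above (3 stages drawn from a pool of 5, in random order) can be sketched in a few lines. This is an illustrative Python sketch under stated assumptions, not the game's implementation: the stage names and the `pick_stages` function are hypothetical, since the write-up only gives the counts.

```python
import random

# Hypothetical stage names; the write-up only says there are 5 stages.
STAGE_POOL = ["stage_a", "stage_b", "stage_c", "stage_d", "stage_e"]


def pick_stages(pool, count=3, rng=None):
    """Return `count` distinct stages from `pool` in random order."""
    if rng is None:
        rng = random.Random()
    # random.sample picks without replacement, so no stage repeats.
    return rng.sample(pool, count)


if __name__ == "__main__":
    print(pick_stages(STAGE_POOL))
```

Because the pool is just a list, adding a new stage in a content update only means appending to `STAGE_POOL`; the selection logic stays the same.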

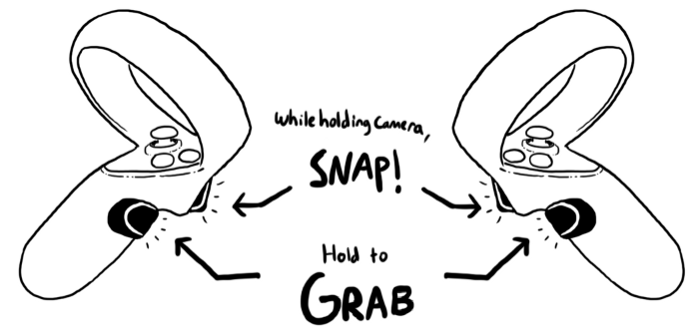

Simple Controls

Players grab the camera by holding the grip button and press the trigger button to take a snapshot of what the camera sees. I wanted the experience to be as simple as possible, with a low learning curve for the general audience. In the game, the controls are presented as a virtual “instructions sheet” that users can read to learn the controls, which I explain in the Onboarding Experience section under Interaction Design.

Interaction Design

Virtual reality is a different medium that works differently from traditional web & mobile applications. Because there are no established standards in VR yet, I decided to come up with my own interaction designs based on my own insights & research.

Onboarding Experience

It’s very important to have a clear onboarding experience for players who are new to the experience. Being in VR adds to the challenge, since it differs from the 2D interfaces we see in web & mobile apps.

I asked myself “What would you do when you’re looking for answers in life?” and explored multiple ways to present the instructions. People tend to look upwards when they’re looking for answers, so I placed the controls scheme in front of the player, slightly above eye level, at the beginning of the experience. I made the controls scheme white and added a drop shadow so it stays legible against the sky. In case the user wants to look at the instructions again, they can be found on the car’s dashboard. The instructions are also narrated as an audio guide, which complements the visual experience. I also simplified how the experience starts: when the player holds the camera for the first time, the car starts moving forward.
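The control flow implied here (grip to hold the camera, trigger to take a snapshot, first grab starts the ride) can be sketched as a tiny state holder. This is a hedged illustration in Python rather than the project's code: `SafariSession`, `on_grip`, and `on_trigger` are hypothetical names and do not correspond to any real VR SDK.

```python
class SafariSession:
    """Tracks camera state for one player (illustrative only)."""

    def __init__(self):
        self.ride_started = False  # car stays parked until the first grab
        self.camera_held = False
        self.photos = []

    def on_grip(self, pressed):
        """Grip button: hold to grab the camera, release to let go."""
        self.camera_held = pressed
        if pressed and not self.ride_started:
            # Holding the camera for the first time starts the car.
            self.ride_started = True

    def on_trigger(self, view):
        """Trigger button: take a snapshot only while the camera is held."""
        if not self.camera_held:
            return False
        self.photos.append(view)
        return True


if __name__ == "__main__":
    session = SafariSession()
    session.on_grip(True)
    session.on_trigger("whatever the camera sees")
    print(session.ride_started, len(session.photos))
```

Tying the start of the ride to the first grab means there is no separate "start" step to explain during onboarding.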

Locomotion

While the player has 6 degrees of freedom when taking pictures with the camera, the player sits in a safari car that serves as a point of reference. This greatly reduces motion sickness, since users can orient themselves against the safari car visible in front of them.

I made several changes from the initial version. Since the experience is played within a fixed time of 3–5 minutes, we don’t need the feature that lets you control the car’s movement. I replaced the buttons with a controls scheme that players can read in case they forget how to interact with the system.

Insect Description

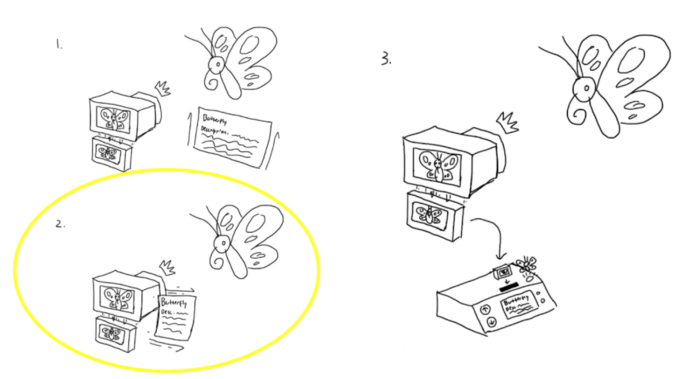

Learning about an insect after taking a picture is the essential educational value of this experience. The big question is, how should I present it effectively? In the initial prototype, the information only showed up on the car’s dashboard, which the previous user test showed was barely noticeable. I came up with 3 different interaction designs:

1. Take a picture of an insect; information pops up anchored to the insect’s position.

2. Take a picture of an insect; information pops up anchored next to the player’s camera position.

3. Take a picture, grab the picture, and put it into a slot; information and a small hologram of the insect pop up on the dashboard screen.

After testing them, I found several problems with the designs. Problem with Design 1: if the insect is far away from the player, the insect information is difficult to read. Problem with Design 3: although it has the most entertaining interaction, it takes a lot of steps to perform a simple task, which would be difficult to explain in the onboarding experience, & the information on the dashboard would still be barely noticeable. This leaves Design 2 as the best fit for the experience since it’s faster & more reliable than the other designs.

Player Avatars

In a shared VR experience, players need a way to tell each other apart. At the same time, personal customization is not needed because it’s a short experience that is generated differently every time the game is run. I decided to use a base avatar head with a variety of fun hats that are randomly assigned to each player. Players can easily refer to each other by the hat each avatar wears. I didn’t develop this feature at the time: building a shared experience wasn’t possible, so it wasn’t urgent.

Sound

While virtual reality mainly relies on visuals, sound is an important element of virtual reality experiences. I added ambient background music that evokes being peacefully lost in the wilderness. At the beginning of the experience, a narrator tells the players about the narrative behind the experience and the controls to play the game. For the narrator, I used a text-to-speech generator and added a radio distortion filter to make it sound like it’s transmitted through the car’s radio.

Early in the game design, I considered adding accessibility support for the visually impaired, who could still sense the ambience & sounds of the experience. I also planned to add sounds for insects that make sound, played when the safari car draws near a specific insect, something like spatially-aware sound-emitting objects. Unfortunately, due to time constraints, I couldn’t implement the feature.

User Testing

I was very worried that I might not be able to find someone who could test the experience’s interaction design. Fortunately, one of the representatives from AMNH has an Oculus Quest and could playtest the experience. Please note that there is no one-size-fits-all solution in interaction design for spatial experiences. My solutions won’t necessarily work for other kinds of VR experiences, which is why research is important.

Results

A week before the final presentation, I sent the app build to a representative who has an Oculus Quest. This was also the first time I conducted a remote playtesting session. I found an unusual solution in which the tester logs into Zoom on 2 separate devices. The playtester connects his Quest to a mobile device logged into Zoom, which streams the headset view to me. That way, I can observe & evaluate video of both what the tester is doing in real life and what the tester is seeing in the headset.

Though there were some limitations, such as no audio from the Quest since it had to be disabled to prevent echo, all the features I had worked well for the tester, so I could finalize the app build for the project presentation.

Emerging Behaviors

Beyond testing the interaction design, I noticed several common emergent behaviors in the previous Snap Odyssey playtests. It’s interesting to see common patterns in how people behave when they interact with an object. Though these interactions were not planned by design, I love seeing players have fun with the camera. What would you do when you play this experience?

Taking pictures simultaneously

Stacking pictures

Taking selfies

Limitations

Due to time constraints & limited resources, there were several features that I couldn’t complete during the 3-month development of the project:

- Networking for multiplayer real-time shared experience configurations & player avatars

- E-mail & share photo feature, audio responsive objects, different players get different information feature

- More user testing, once the pandemic ends (hopefully)

- Assets polishing: 3D model animations

- Spatial audio for accessibility

Not to mention that I worked on this project on my own (because I am lonely), and it’s a bit difficult to juggle everything alone. I have limited 3D modeling skills, which is why I used models from Google Poly that have a Creative Commons license. To add insult to injury, the pandemic happened and everything related to user testing and presenting the project at an exhibition turned into a mess. The experience was planned to be a shared multiplayer experience, but had to be scaled down to a single-player experience due to the constraints.

Conclusion

I’m happy with what I did for the project, and the app build worked perfectly. Some features didn’t get developed due to limited time & resources. The presentation went well and I got an A for the class, but I was really disappointed that I didn’t get the chance to present the experience to the public and that the project was suspended because of the pandemic. Though it’s suspended for now, I believe this experience could be relaunched someday when the pandemic is over.

Future Plans

The only thing I can do for now is wait until the pandemic is under better control. Due to the pandemic, most operations at AMNH are suspended until further notice. This experience was supposed to be shown as one of the first interactive experiences that visitors can play in the upcoming Gilder Insect Center.

This also affected me personally, since it means I can’t do any local shared-experience VR experiments in the near future. The pandemic made it difficult to perform user testing and made people hesitant to use VR headsets in public.

Credits

Special thanks to Matt Parker for teaching the class at the NYU Game Center as well as Debra Everett-Lane & Brett Peterson for being supportive representatives from the American Museum of Natural History.
